We recently finished another DevOps engagement, this time in a Microsoft house, and we want to share the challenges we faced along the way. At Magentys a typical DevOps engagement takes no longer than 6-8 weeks, depending on the complexity of the solutions and the DevOps practices to reinforce. The team's level of DevOps maturity is critical to finishing on time, because DevOps is not just about implementing a CI/CD pipeline with some tools and showing green builds on a screen; it is also about the development team adopting a set of patterns and practices.

As some of you know, Microsoft has long experience in Application Lifecycle Management and owns a set of very good tools that cover its main areas:

- Project/Portfolio Management

- Source Code Management

- Build Management

- Release Management

- Test Management

- Package Management

With those tools in mind, we proceeded as follows:

1) DevOps Healthcheck

There is no time to lose, so before every DevOps engagement we send out a pre-engagement questionnaire. The development team (sometimes the Dev Lead or the Head of IT, other times the whole team sits down for 20 minutes and fills it in) answers questions about the patterns, practices and tools they use for Agile Development and Agile Delivery.

The questions target the principal areas of DevOps and cover most of the main practices, such as Source Code Management, Build Management, CI/CD pipelines, Quality Gates, Team Collaboration, Configuration and Provisioning, Cloud and others.

With this information in hand, the second step is to define the level of maturity in each of the DevOps areas and use it to draft one or more plans that accelerate adoption.
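
As a rough illustration of how questionnaire answers could be turned into a maturity level per area, here is a minimal Python sketch. The area names come from the list above, but the 0-4 answer scale, the level names and the helpers `maturity_by_area` and `weakest_areas` are assumptions made for this example, not the actual healthcheck we use.

```python
from statistics import mean

# Hypothetical maturity levels, lowest to highest (an assumption for this sketch).
LEVELS = ["Initial", "Managed", "Defined", "Measured", "Optimised"]


def maturity_by_area(answers):
    """Map each DevOps area to a maturity level.

    `answers` maps an area name to a list of scores, one per question,
    each on an assumed 0-4 scale (0 = not practised, 4 = fully in place).
    """
    result = {}
    for area, scores in answers.items():
        if not scores:
            raise ValueError(f"no answers for area {area!r}")
        if any(not 0 <= s <= 4 for s in scores):
            raise ValueError(f"scores for {area!r} must be between 0 and 4")
        # Truncate the average so a level only counts once it is fully reached.
        result[area] = LEVELS[int(mean(scores))]
    return result


def weakest_areas(answers, count=2):
    """Return the areas with the lowest average score, to prioritise in the plan."""
    ranked = sorted(answers, key=lambda area: mean(answers[area]))
    return ranked[:count]


# Made-up sample answers, for illustration only.
sample = {
    "Source Code Management": [4, 3, 3],
    "Build Management": [2, 2, 1],
    "CI/CD pipelines": [1, 0, 1],
    "Quality Gates": [0, 1, 0],
}
levels = maturity_by_area(sample)
priorities = weakest_areas(sample)
```

With something like this, the lowest-scoring areas become the natural starting point for the plans discussed in the next step.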

2) Plans and Strategies definitions and reviews

DevOps is not just about bringing in the trendiest, shiniest tools that let the team orchestrate each piece of the software delivery process successfully, faster and fully automated. It is also about enabling best practices in each of the SDLC areas: reviewing the current processes, working out how to optimise them and collaborating on improving them.

Source Code Management: Is the team using the right source code repository given the nature of the project? Does the repo allow the team to operate given their coding practices? Is the team practising code reviews and pull requests? Does the team have a specific branching strategy that allows them to deliver continuously, and that is easy to automate and manage?

There are plenty of possibilities nowadays. We find teams using:

- Git

- GitHub

- TFVC

- Subversion

- Perforce

- Shared folders (!)

Some of them, like Git or GitHub, are well known to most development teams, broadly adopted and easy to integrate into CI pipelines and build systems. Others, like Perforce, are less straightforward to work with but have some advantages over Git, such as storing large binary files.

Another aspect of the SCM model is how to split (or not split) the different projects into one or multiple repos. We found that some teams prefer multiple repositories for the same product, one per project or service, so that each can be easily shared with external teams, or because each has a different business model and its own life cycle. The endless discussions about one repo versus multiple repos, or one repo with multiple Git submodules, always need to be backed by business cases: how the source code is organised is not only up to the developers, and everyone in the team has a say in it.

One Repo vs Multiple Repos:

- Developers, Software Development Engineers in Test and team members specialised in DevOps: might prefer to have only one repository, which makes it easy to access the code, branch out, generate build definitions, release between branches, solve dependencies, etc. But some may disagree and say they do not want too much noise from multiple projects in the same repo, especially with several commits per day coming from different teams.

- Business owners: they play a big part in this discussion, as they know how those projects are going to be released, why, where and for how long, and whether they are going to be released as SaaS or PaaS or will just be a website. The product or products might be sold as white label products, which comes with a different business model. Sometimes they want to sell some of the IP but not all of it. The repo strategy will change according to these business cases.

Here are some interesting articles that can help you understand the differences between single and multiple repos.

Why you should use a single repository for all your company’s projects

http://blog.shippable.com/our-journey-to-microservices-and-a-mono-repository

Another critical part of the plan is the branching strategy: what the purpose of each branch is and, most importantly, how changes are merged or delivered between branches.

The strategy we chose for this particular project was quite simple (always try to keep it simple from the beginning; there is always time to add complexity):

Some interesting links that can help you decide which branching strategy could suit you:

https://guides.github.com/introduction/flow/

https://docs.microsoft.com/en-us/vsts/tfvc/branch-strategically

https://docs.microsoft.com/en-us/vsts/articles/effective-tfvc-branching-strategies-for-devops

https://docs.microsoft.com/en-us/vsts/git/concepts/git-branching-guidance

https://docs.microsoft.com/en-us/vsts/tfvc/branching-strategies-with-tfvc

Project/Portfolio Management

What project management tool is the team currently using? Does it have full end-to-end traceability, from the conception of a story, to the code written for it, to the deployment to production systems? Are any tests attached to these stories? Does the team have full visibility of the work done in each area through this tool? Is the chosen tool able to properly implement the process practices to follow (Agile, Scrum, XP, Kanban, …)?

Well, most teams lack the ability to track the lifecycle of a story across the different development areas. We found that most prefer Atlassian Jira, and others TFS/VSTS or Target Process. What matters is not so much how these tools manage stories (more or less all of them let you do the very same things, just with a different look and feel) but how they track the work done to complete them.

For example, Atlassian Jira is capable of more than just creating issues on nice Kanban boards: it can integrate fully with GitHub so you can check the work done by the dev team, and it has plugins to bring in your test cases or even link issues to release pipelines in specific external tools.

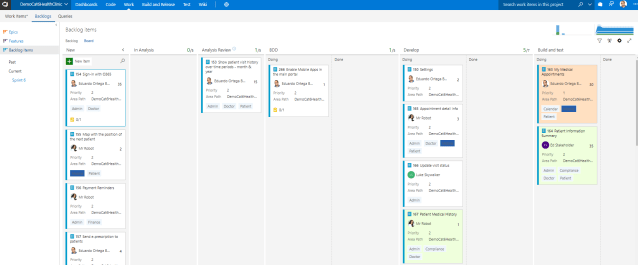

In our case we used Visual Studio Team Services as the main tool for project management. We migrated all the epics, stories and tasks from their legacy system (Target Process) to VSTS.

(Images taken from a local demo, not the real environment.)

Build Management

What do we have to build? How often? Can we automate it? What technologies? What’s the output of it?

We need to define a plan for how we are going to build our software (input) and what the outcome of that is going to be. Factors to take into consideration:

- Build definitions: We need to think about what we want to build, whether we want to run tests as part of the builds and which ones, whether static code analysis will be part of them, whether we are going to build artefacts that will be deployed later on as part of a CD pipeline, etc.

- Project types to build: Web, Services, Apps, Databases, Scripts, Mobile, etc.

- Frequency: How often do we have to run those builds? Is it on demand? Do we have nightly builds? Are we implementing Continuous Integration at every branch level?

- Outcome: What do we want to generate as a part of the build definition output? Are we creating packages, binaries or any kind of artefacts? Do we want to run a set of tests and analyse the results? Are we storing these packages in an artefact library or shared folder?

- Artefacts: How are we versioning them? Are we creating Packages? How do we establish the quality of these artefacts?

- Build tool: Jenkins, Bamboo, TeamCity, TFS/VSTS, others?

- Build/Test/Deploy Agents: Can we host these agents locally or will they be deployed in the cloud? How many do we need? Do they need different capabilities?

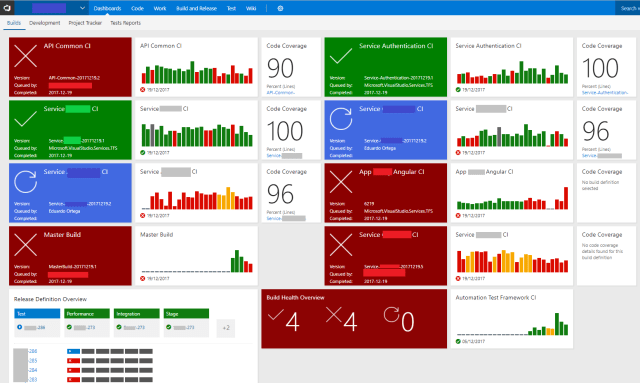

In our case we defined automated CI builds on every branch, filtered by project paths and triggered by pull requests, plus nightly builds.
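
The idea of filtering builds by project path can be sketched in a few lines of Python. This is only an illustration of the concept, not how VSTS implements its triggers: the build definition names, the path patterns and the `builds_to_trigger` helper are all hypothetical.

```python
from fnmatch import fnmatchcase

# Hypothetical build definitions, each with the repo paths that should trigger it.
BUILD_PATH_FILTERS = {
    "web-ci": ["src/Web/*"],
    "api-ci": ["src/Api/*", "src/Shared/*"],
    "db-ci": ["db/*"],
}


def builds_to_trigger(changed_files, filters=BUILD_PATH_FILTERS):
    """Return the sorted names of builds whose path filters match a changed file.

    Note that `*` in fnmatch patterns also matches path separators, so
    "src/Web/*" covers files in nested folders as well.
    """
    triggered = set()
    for build, patterns in filters.items():
        for path in changed_files:
            if any(fnmatchcase(path, pattern) for pattern in patterns):
                triggered.add(build)
                break  # one matching file is enough to queue this build
    return sorted(triggered)


# A pull request touching shared code and documentation only queues the API build.
changed = ["src/Shared/Logging.cs", "docs/readme.md"]
print(builds_to_trigger(changed))
```

The benefit of this kind of filter is that a commit to one project does not queue builds for every other project living in the same repository.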

The tool chosen for this was VSTS, as it provided all of the above, integrated with SonarQube and was capable of building projects of different kinds and technologies.

Release Management

Defining the release strategy can take some time, as it is not only about creating a delivery pipeline. It is more about what needs to be released and when, whether it will be released to the cloud or on-premises, whether the release is manual or automated (and, if manual, how to automate it), how to make the process repeatable, etc.

Factors we take into consideration when defining the release strategy:

- What do we have to release? It could be just services, web, desktop or mobile applications. It could be Infrastructure as Code, such as deploying virtual machines, Docker containers or load balancers. It could even be databases!

- How are we going to release it? How is this release generated? Is this process automated? Does it require manual approval at any of the stages?

- What steps need to be taken for releasing our artefacts? What quality metrics and quality gates are we adding to this process?

- Do we have a rollback plan? Do we have a disaster recovery plan?

- What environments am I going to need for releasing my product? Which teams will use them? Will they be static or dynamically generated?

- Will it be deployed locally or in the cloud?

In our case we had to deploy them all: web apps, services, databases, infrastructure, environments, all of it, and our target environment was Microsoft Azure. For this project in particular it was Azure PaaS services such as Azure App Services, Azure Elastic Pools and Azure API Management. There was also an IaaS component (virtual machines, network infrastructure, hybrid infrastructure, containers, etc.), which was more focused on interoperability with legacy systems.

To control these releases and deployments we used VSTS Release Management, which also allowed us to enable Continuous Deployment and to easily visualise which versions of our releases are deployed and where.
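
To make the ideas of staged promotion, manual approvals, rollback and "which version is deployed where" more concrete, here is a minimal Python sketch. It is not how VSTS Release Management works internally; the stage names, the approval rule and the `ReleaseBoard` class are assumptions made purely for illustration.

```python
from dataclasses import dataclass, field

# Hypothetical environment order; real pipelines will differ.
STAGES = ["dev", "test", "production"]
# Stages that need a manual approval before deployment (an assumption).
NEEDS_APPROVAL = {"production"}


@dataclass
class ReleaseBoard:
    """Tracks which release version is deployed in each environment."""
    deployed: dict = field(default_factory=dict)
    history: dict = field(default_factory=dict)

    def promote(self, version, stage, approved=False):
        """Deploy `version` to `stage` if the previous stage already runs it."""
        index = STAGES.index(stage)
        if index > 0 and self.deployed.get(STAGES[index - 1]) != version:
            raise RuntimeError(f"{version} has not passed {STAGES[index - 1]}")
        if stage in NEEDS_APPROVAL and not approved:
            raise PermissionError(f"{stage} requires a manual approval")
        # Remember the previous version so that a rollback is possible.
        self.history.setdefault(stage, []).append(self.deployed.get(stage))
        self.deployed[stage] = version

    def rollback(self, stage):
        """Restore the version that was deployed before the last promotion."""
        if not self.history.get(stage):
            raise RuntimeError(f"nothing to roll back in {stage}")
        previous = self.history[stage].pop()
        if previous is None:
            del self.deployed[stage]
        else:
            self.deployed[stage] = previous


board = ReleaseBoard()
board.promote("1.0.0", "dev")
board.promote("1.0.0", "test")
board.promote("1.0.0", "production", approved=True)
board.promote("1.1.0", "dev")
print(board.deployed)
```

The point of the sketch is the shape of the rules: a version only moves forward once the previous environment runs it, some stages wait for a person, and every deployment keeps enough history to go back.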

Test Management and Quality Gates

We are not going to go deep into this, as it might be a good topic for another blog post, but we can share some insight into the main quality gates and test management aspects we took into consideration when reviewing the Test Management and Test Automation strategy followed by our client.

The absolute number one priority for moving towards agile practices and DevOps implementations was to automate testing across the whole SDLC.

The main quality gates we proposed were:

- At story conception level: Have an agreed definition of done and, for every story, well-defined acceptance criteria written in Gherkin syntax to help the dev and test engineers implement tests properly using BDD and TDD practices.

- Code Reviews, Pull Requests on every merge operation.

- Code Coverage: 100% of the code needs to be covered by unit tests.

- Test Automation for UI, API, database testing, performance tests, smoke tests and others, all integrated into the CI/CD pipelines at different stages, with results automatically collected and asserted as Quality Gates.

- Regression testing happening on nightly builds.

- Code Analysis rules for coding practices when building.

- SecOps practised by integrating SAST and DAST tools as part of the CI/CD pipelines.
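
As a sketch of what "results automatically collected and asserted as Quality Gates" could look like in code, the Python below checks a set of pipeline metrics against a few of the gates listed above. The `BuildMetrics` fields, the `evaluate_quality_gates` helper and the sample numbers are hypothetical; in a real pipeline these values would come from the test runner, the coverage tool, the code analysis rules and the SAST/DAST scanners.

```python
from dataclasses import dataclass


@dataclass
class BuildMetrics:
    """Results collected from a pipeline run (field names are hypothetical)."""
    coverage_percent: float
    failed_tests: int
    analysis_violations: int
    open_security_findings: int


def evaluate_quality_gates(metrics, min_coverage=100.0):
    """Return a list of failed gates; an empty list means the build may proceed."""
    failures = []
    if metrics.coverage_percent < min_coverage:
        failures.append(
            f"coverage {metrics.coverage_percent:.1f}% is below {min_coverage:.1f}%"
        )
    if metrics.failed_tests > 0:
        failures.append(f"{metrics.failed_tests} automated test(s) failed")
    if metrics.analysis_violations > 0:
        failures.append(f"{metrics.analysis_violations} code analysis violation(s)")
    if metrics.open_security_findings > 0:
        failures.append(f"{metrics.open_security_findings} open security finding(s)")
    return failures


# Made-up numbers for a build that should be stopped at the gate.
run = BuildMetrics(
    coverage_percent=92.5,
    failed_tests=2,
    analysis_violations=0,
    open_security_findings=1,
)
for failure in evaluate_quality_gates(run):
    print("Quality gate failed:", failure)
```

Keeping the thresholds as parameters (like `min_coverage` here) makes it possible to start with a lower bar on legacy projects and tighten it over time.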

Manual testing is out of the question. But if you are not on a greenfield project and still have to deal with manual testing (we had some legacy projects where manual testing was still a must), VSTS also provides a nicely done solution for test management, complemented by a Windows client (Microsoft Test Manager) and the Test and Feedback tool for browsers.

3) Implementation

After we agreed and signed off all the strategy plans for:

- Branching strategy

- Repository strategy

- Build strategy

- Release strategy

- Environments architecture

- Test Automation strategy

And once we had discussed other topics such as SecOps, packages, monitoring and alerts, the hot fix approach, cloud costs, etc., we started implementing each area, beginning with the repo, branching and build strategies.

We have to say that, thanks to spending a proper 60% of the time on planning and strategy discussions, the whole implementation took no more than 30% of the time.

4) Adoption

Teams that have never worked in a fully automated environment where lots of DevOps practices are applied can find it difficult to absorb everything from one day to the next. We solve this problem by having different members of the team, specialised in different areas of expertise, shadow us during the implementation phase.

We also held one recorded knowledge transfer (KT) session for every delivery plan and invited the whole team (and external teams too) to take part.

And last but not least, we left behind training materials and guides to help them fully develop their capabilities around the new DevOps tools and processes.

Some teams prefer that we organise Agile workshops over 3 days, with some additional days for tools and technical Q&A sessions.

5) Support

Finally, our modus operandi is to engage, prepare, plan, deploy, share and guide, and then give support: we guide these teams for a few weeks across the different areas of work, ensuring they are self-sufficient enough to start working in an agile manner with the new processes and tools. We then contact them periodically to see how much they have matured in the areas measured during our pre-engagement.

To summarise, it has been a great experience to take part in such a project, defining the whole DevOps strategy from the very beginning and seeing it flourish over time.

We have made other journeys of a different nature and with different technologies, but with mostly the same approach and the same outcome.

And remember: DevOps is not a tool, not a guide and not a methodology; it is a journey.
